From 3 to 7: Securing OSI Layers with AWS Services

The OSI Reference Model is common ground for tech professionals and enthusiasts alike, and it has become a standard reference point in networking and cloud security. The model divides network communication into seven interconnected layers, each with its own set of functions, ranging from transmitting and receiving raw bit streams over a physical medium to delivering the final, decoded data to the end user’s application.

In cloud security, hardening layers 3 to 7 is paramount: getting it right keeps unauthorized traffic out of the network, where it could otherwise disrupt the architecture. However, there is considerable disagreement about how best to apply security best practices to these layers.

Luckily, AWS offers a comprehensive set of services that work together to cover the security aspects of each of these layers. In this post, we will walk through several AWS services that make securing OSI layers 3 to 7 easier to manage.

Layers 3 and 4

AWS Network Firewall

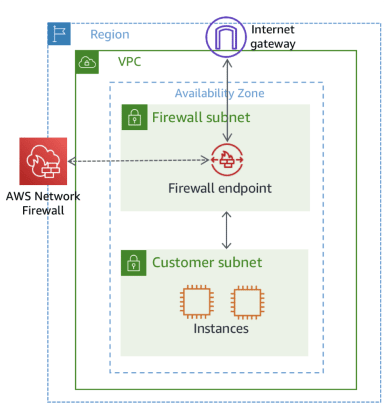

AWS Network Firewall is a managed security service that makes it easier to deploy essential network protections across your AWS workloads and VPCs. It is highly available and scales automatically with network traffic, which is a great advantage, as there is no underlying infrastructure to deploy, patch, or manage.

Beyond the managed rule groups that AWS provides for AWS Network Firewall, you can add custom rules tailored to your organization’s security needs. The firewall’s stateful rules engine is compatible with Suricata, the free, open-source intrusion detection and prevention engine developed by the OISF (Open Information Security Foundation), so you can write your own Suricata-compatible rules or import them from trusted providers.

These rules tell the firewall which traffic to pass, drop, or alert on as it enters or leaves your VPCs, reducing the risk that malicious data reaches your cloud environment. For example, they can block requests to unauthorized domains or traffic from known bad IP addresses.
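To make this concrete, here is a minimal Python sketch that renders two Suricata-style rules: one that drops TLS connections whose server name ends with a given domain, and one that drops traffic from a known bad IP range. The helper names, `sid` values, domain, and CIDR are hypothetical placeholders; check the exact rule options against the Suricata and AWS Network Firewall documentation before putting them into a stateful rule group.

```python
def block_domain_rule(domain: str, sid: int) -> str:
    """Hypothetical helper: drop TLS sessions whose SNI ends with `domain` (case-insensitive)."""
    return (
        "drop tls $HOME_NET any -> $EXTERNAL_NET any "
        f'(msg:"Block TLS to {domain}"; tls.sni; content:"{domain}"; '
        f"nocase; endswith; flow:to_server, established; sid:{sid}; rev:1;)"
    )


def block_cidr_rule(cidr: str, sid: int) -> str:
    """Hypothetical helper: drop all IP traffic from `cidr` into the VPC."""
    return (
        f"drop ip {cidr} any -> $HOME_NET any "
        f'(msg:"Block known bad range {cidr}"; sid:{sid}; rev:1;)'
    )


# One rule per line, as Suricata rule text is usually written.
rules = "\n".join([
    block_domain_rule("bad.example.com", 1000001),
    block_cidr_rule("203.0.113.0/24", 1000002),
])
print(rules)
```

Each `sid` must be unique within the rule group, which is why the sketch takes it as an explicit parameter rather than generating it.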

Typically, a public subnet’s route table sends internet-bound traffic straight to an internet gateway. If the company decides to implement AWS Network Firewall, parts of the standard networking setup need to be redesigned: the application subnets’ route tables must send traffic to the firewall endpoint, and a dedicated route table associated with the internet gateway must send return traffic for those subnets back through the firewall endpoint as well.

We can think of the network infrastructure as a puzzle: every piece has to be adjusted until they all fit. These routing changes place Network Firewall in the path of traffic between the Internet and our servers, in both directions, so it can inspect that traffic.
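The routing changes can be sketched with a toy longest-prefix-match lookup in Python. It assumes a single VPC (10.0.0.0/16) with one protected public subnet (10.0.1.0/24) and uses hypothetical target names (`vpce-fw` for the firewall endpoint, `igw-main` for the internet gateway); it models only how a route is chosen, not AWS itself.

```python
import ipaddress

# Hypothetical route tables. "vpce-fw" stands for the firewall endpoint,
# "igw-main" for the internet gateway.
PUBLIC_SUBNET_RT = {
    "10.0.0.0/16": "local",
    "0.0.0.0/0": "vpce-fw",    # internet-bound traffic goes to the firewall first
}
FIREWALL_SUBNET_RT = {
    "10.0.0.0/16": "local",
    "0.0.0.0/0": "igw-main",   # inspected traffic leaves through the gateway
}
IGW_EDGE_RT = {                # route table associated with the internet gateway
    "10.0.0.0/16": "local",
    "10.0.1.0/24": "vpce-fw",  # inbound traffic for the public subnet is inspected too
}


def next_hop(route_table: dict[str, str], dest_ip: str) -> str:
    """Return the target of the most specific route that contains dest_ip."""
    addr = ipaddress.ip_address(dest_ip)
    matching = [
        ipaddress.ip_network(cidr)
        for cidr in route_table
        if addr in ipaddress.ip_network(cidr)
    ]
    if not matching:
        raise LookupError(f"no route to {dest_ip}")
    best = max(matching, key=lambda net: net.prefixlen)  # longest prefix wins
    return route_table[str(best)]


print(next_hop(PUBLIC_SUBNET_RT, "198.51.100.10"))    # vpce-fw
print(next_hop(IGW_EDGE_RT, "10.0.1.25"))             # vpce-fw
print(next_hop(FIREWALL_SUBNET_RT, "198.51.100.10"))  # igw-main
```

In this layout, both traffic arriving from the Internet and traffic leaving the public subnet pass through the firewall endpoint, while the firewall subnet is the only one that routes directly to the internet gateway.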

Security Groups

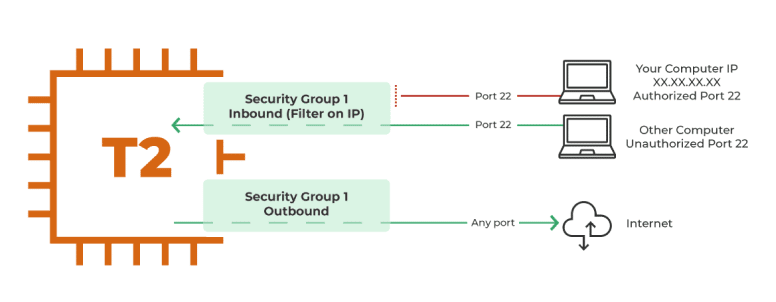

Security Groups are stateful: they track each connection, so response traffic for an allowed connection is automatically permitted, regardless of the rules in the opposite direction. A security group’s configuration consists of two kinds of rules: inbound rules and outbound rules.

Inbound rules define which traffic (protocol, port range, and source) may reach the instance. Outbound rules define which traffic may leave the instance and where it may go. Because security groups are stateful, the response to an allowed inbound request can leave the instance even if no outbound rule matches it, and the response to an allowed outbound request can come back in even if no inbound rule matches it.
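The effect of statefulness is easiest to see in a toy model. The Python class below is a simplified stand-in for a security group, not an AWS API: it matches on destination port only and identifies a connection by remote IP, remote port, and local port. The IP addresses and ports in the usage lines are illustrative.

```python
from dataclasses import dataclass, field


@dataclass
class ToySecurityGroup:
    """Simplified stateful filter. Assumption: rules match destination ports only."""
    inbound_ports: set[int]
    outbound_ports: set[int]
    # Tracked connections, keyed by (remote_ip, remote_port, local_port).
    flows: set[tuple[str, int, int]] = field(default_factory=set)

    def inbound(self, src_ip: str, src_port: int, dst_port: int) -> bool:
        flow = (src_ip, src_port, dst_port)
        if flow in self.flows:            # reply to a connection we opened
            return True
        if dst_port in self.inbound_ports:
            self.flows.add(flow)          # remember it so the response can leave
            return True
        return False

    def outbound(self, dst_ip: str, dst_port: int, src_port: int) -> bool:
        flow = (dst_ip, dst_port, src_port)
        if flow in self.flows:            # response to an allowed inbound request
            return True
        if dst_port in self.outbound_ports:
            self.flows.add(flow)          # remember it so the reply can come back
            return True
        return False


sg = ToySecurityGroup(inbound_ports={443}, outbound_ports=set())
print(sg.inbound("198.51.100.7", 50000, 443))   # True: HTTPS is allowed in
print(sg.outbound("198.51.100.7", 50000, 443))  # True: its response needs no outbound rule
print(sg.outbound("198.51.100.7", 80, 40000))   # False: a new outbound connection is not allowed
```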

Security groups are often misconfigured. How are yours doing?

Layers 5 and 6

AWS Session Manager

Securing access to instances in private subnets is sometimes hard work. Traditionally, administrators reach these instances through a bastion host placed in a public subnet, because instances in private subnets cannot be reached directly from the Internet.

There are several ways to protect and harden a bastion, but they take a lot of time to set up. Customers can configure the bastion to accept connections only through a VPN, restrict inbound traffic with network ACL entries, create restrictive security groups, and build route tables specific to the applications in the private subnet, among other measures.

Luckily, AWS offers Session Manager, a capability of AWS Systems Manager (SSM). It lets users connect to instances without a bastion host, without opening inbound ports, and without managing SSH keys or hard-coded credentials. Instances in private subnets without internet access can still be reached, as long as VPC interface endpoints for Systems Manager are in place. With this service, we get the additional abstraction layer the server needs: access is granted or revoked centrally through IAM permissions.

AWS takes care of the tunneling and of securing the traffic between the user and the instance. Users only need IAM credentials with permission to start a session on the instance, and the instance needs the SSM Agent and an IAM role that allows it to communicate with Systems Manager.
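Since access is controlled through IAM, a scoped-down policy is the key piece. The Python helper below builds a minimal IAM policy document, modeled on the example policies in the Session Manager documentation, that lets a user start sessions only on specific instances and terminate or resume only their own sessions. The helper name, region, account ID, and instance ID are placeholders, and real deployments usually add further statements (for example, to let users list instances in the console).

```python
import json


def session_manager_policy(region: str, account_id: str, instance_ids: list[str]) -> str:
    """Build a minimal IAM policy scoped to specific instances (illustrative sketch)."""
    instance_arns = [
        f"arn:aws:ec2:{region}:{account_id}:instance/{iid}" for iid in instance_ids
    ]
    policy = {
        "Version": "2012-10-17",
        "Statement": [
            {
                # Sessions may be started only on the listed instances.
                "Effect": "Allow",
                "Action": "ssm:StartSession",
                "Resource": instance_arns,
            },
            {
                # Users may end or resume only the sessions they started themselves.
                "Effect": "Allow",
                "Action": ["ssm:TerminateSession", "ssm:ResumeSession"],
                "Resource": "arn:aws:ssm:*:*:session/${aws:username}-*",
            },
        ],
    }
    return json.dumps(policy, indent=2)


# Placeholder region, account ID, and instance ID.
print(session_manager_policy("us-east-1", "123456789012", ["i-0123456789abcdef0"]))
```

With such a policy attached, a user who has the Session Manager plugin for the AWS CLI installed can open a shell with `aws ssm start-session --target <instance-id>`.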

Load Balancer

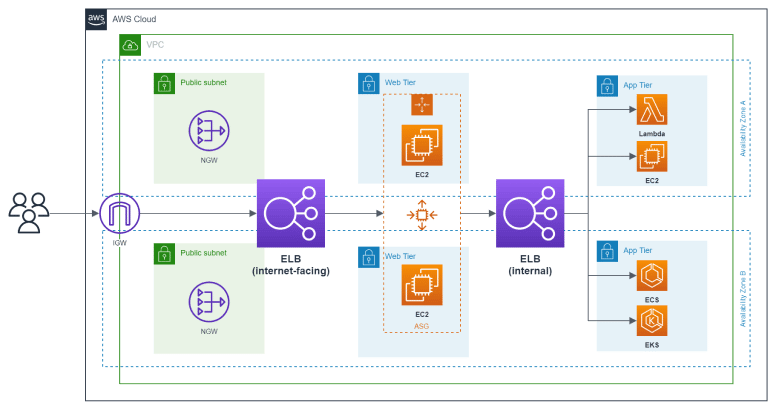

With Elastic Load Balancing, you can distribute incoming application traffic across multiple targets, such as EC2 instances or containers, so that no single server is overwhelmed in a multi-server architecture that receives heavy traffic. An Application Load Balancer can also route requests based on their content; for example, it can send each URL path to a different group of targets. Because traffic is constantly distributed across healthy targets, the risk of requests being dropped or throttled by an overloaded server is drastically reduced.
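The distribution logic can be illustrated with a toy model: requests are matched against path-prefix rules (loosely like Application Load Balancer listener rules) and then spread round-robin across the healthy targets of the chosen group. Round robin is the ALB’s default routing algorithm, but the class name, target names, and health set here are hypothetical simplifications, not the Elastic Load Balancing API.

```python
class ToyLoadBalancer:
    """Hypothetical model: path-prefix rules pick a target group, then requests
    are spread round-robin across that group's healthy targets."""

    def __init__(self, groups: dict[str, list[str]], rules: list[tuple[str, str]], default_group: str):
        self.groups = groups                 # target group name -> target names
        self.rules = rules                   # (path prefix, group name), checked in order
        self.default_group = default_group   # used when no rule matches
        self.unhealthy: set[str] = set()     # targets currently failing health checks
        self.counters = {name: 0 for name in groups}

    def route(self, path: str) -> str:
        name = next((g for prefix, g in self.rules if path.startswith(prefix)), self.default_group)
        healthy = [t for t in self.groups[name] if t not in self.unhealthy]
        if not healthy:
            raise RuntimeError(f"no healthy targets in group {name!r}")
        target = healthy[self.counters[name] % len(healthy)]
        self.counters[name] += 1             # advance the round-robin position
        return target


lb = ToyLoadBalancer(
    groups={"api": ["api-1", "api-2"], "web": ["web-1", "web-2", "web-3"]},
    rules=[("/api/", "api")],
    default_group="web",
)
print([lb.route("/api/orders") for _ in range(3)])  # ['api-1', 'api-2', 'api-1']
lb.unhealthy.add("web-2")                           # simulate a failed health check
print([lb.route("/") for _ in range(3)])            # ['web-1', 'web-3', 'web-1']
```

Skipping unhealthy targets is what keeps a single failing server from absorbing its share of requests.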

A load balancer can work in tandem with other AWS services, such as AWS WAF and AWS Shield, to further protect the architecture against DDoS attacks. These services can identify, rate-limit, and filter traffic before it reaches the servers and other resources. If you receive a DDoS attack, or if somebody is probing your application for non-existent paths, these services can block or throttle those requests.
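Rate limiting is one of the main tools here. The sketch below is a simplified per-IP sliding-window limiter, similar in spirit to AWS WAF rate-based rules, which block an IP address once its request count over a time window exceeds a threshold. It is not how AWS implements them; the class name, limit, window, and IP addresses are arbitrary illustrative values.

```python
from collections import defaultdict, deque


class RateLimiter:
    """Simplified per-IP sliding-window limiter (illustrative, not AWS's implementation)."""

    def __init__(self, limit: int, window_seconds: float):
        self.limit = limit
        self.window = window_seconds
        self.history = defaultdict(deque)   # ip -> timestamps of recent allowed requests

    def allow(self, ip: str, now: float) -> bool:
        times = self.history[ip]
        while times and now - times[0] >= self.window:  # forget requests outside the window
            times.popleft()
        if len(times) >= self.limit:
            return False                                # over the limit: block
        times.append(now)
        return True


limiter = RateLimiter(limit=3, window_seconds=10.0)
print([limiter.allow("198.51.100.7", t) for t in (0, 1, 2, 3)])  # [True, True, True, False]
print(limiter.allow("203.0.113.9", 3))                           # True: other IPs are unaffected
print(limiter.allow("198.51.100.7", 10.5))                       # True: the request at t=0 expired
```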

Lastly, suppose an application runs in a private subnet. In that case, a load balancer can be placed in a public subnet, where it receives traffic from the Internet and forwards it to the application. Responses flow back to clients through the load balancer, so the application servers never need to sit in a public subnet, yet the application remains reachable from the Internet.

Network Access Control Lists (NACLs)

A network access control list (NACL) is a set of rules that either allow or deny traffic. NACLs apply at the subnet level, so they affect every instance in the associated subnet (whether public or private). Unlike Security Groups, which can only allow traffic, NACLs can also explicitly deny it, for example from a specific IP address or CIDR range.

NACLs support allow and deny rules in both directions, inbound and outbound. Each rule specifies a rule number, a protocol (for example, TCP for HTTP, HTTPS, or SSH), a port range, a source CIDR range (for inbound rules) or a destination CIDR range (for outbound rules), and whether to allow or deny the traffic. Rules are evaluated in order, starting with the lowest rule number, and the first match wins; traffic that matches no rule is denied. Unlike security groups, NACLs are stateless, so return traffic must be explicitly allowed as well, typically through an outbound rule covering the ephemeral port range (1024-65535). In short, NACLs need explicit configuration for traffic in both directions; otherwise, connections will not work correctly.
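The ordered, first-match evaluation and the need for explicit return-traffic rules can be sketched in Python. This toy evaluator ignores protocols and assumes each rule carries a rule number, a CIDR, a port range, and an allow/deny flag; the class and function names, CIDRs, and rule numbers are hypothetical.

```python
import ipaddress
from dataclasses import dataclass


@dataclass(frozen=True)
class NaclRule:
    number: int    # evaluated from lowest to highest
    cidr: str      # source CIDR for inbound rules, destination CIDR for outbound rules
    ports: range   # covered ports, e.g. range(443, 444) for port 443 only
    allow: bool    # True = ALLOW, False = DENY


def nacl_decision(rules: list[NaclRule], ip: str, port: int) -> bool:
    """Return True if the traffic is allowed. The first matching rule wins."""
    addr = ipaddress.ip_address(ip)
    for rule in sorted(rules, key=lambda r: r.number):
        if addr in ipaddress.ip_network(rule.cidr) and port in rule.ports:
            return rule.allow
    return False   # the final catch-all "*" rule denies anything unmatched


inbound = [
    NaclRule(90, "198.51.100.0/24", range(0, 65536), allow=False),  # block a bad range
    NaclRule(100, "0.0.0.0/0", range(443, 444), allow=True),        # HTTPS from anywhere
]
outbound = [
    NaclRule(100, "0.0.0.0/0", range(1024, 65536), allow=True),     # replies to ephemeral ports
]

print(nacl_decision(inbound, "203.0.113.5", 443))     # True
print(nacl_decision(inbound, "198.51.100.9", 443))    # False: rule 90 matches first
print(nacl_decision(outbound, "203.0.113.5", 50000))  # True: the reply can leave
```

Removing the outbound rule would make the last check return `False`: because the NACL is stateless, the HTTPS request would get in, but its reply would be dropped.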

Let’s look at NACLs and Security Groups working together in a practical example to see how they apply in sequence. Suppose the NACL is set up to allow inbound traffic on port 443 from a given CIDR range, but the instance’s Security Group has no rule allowing port 443; in that case, the connection fails. Inbound traffic is first evaluated by the NACL at the subnet boundary, and only if the NACL allows it is it then evaluated by the Security Group attached to the instance.
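The order of evaluation in this example can be traced with a deliberately simplified function (a hypothetical helper, not an AWS API) that reduces each layer to the set of inbound ports it allows. It illustrates the sequence only, not every NACL or security group feature.

```python
def trace_inbound(port: int, nacl_ports: set[int], sg_ports: set[int]) -> str:
    """Follow an inbound request through the subnet's NACL, then the instance's security group.

    Simplification: each layer is reduced to the set of destination ports it allows.
    """
    if port not in nacl_ports:
        return "dropped by the network ACL at the subnet boundary"
    if port not in sg_ports:
        return "dropped by the security group at the instance"
    return "delivered to the instance"


# The scenario from the text: the NACL allows 443, the security group does not.
print(trace_inbound(443, nacl_ports={443}, sg_ports={22}))
```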

Layer 7

Web Application Firewall (WAF)

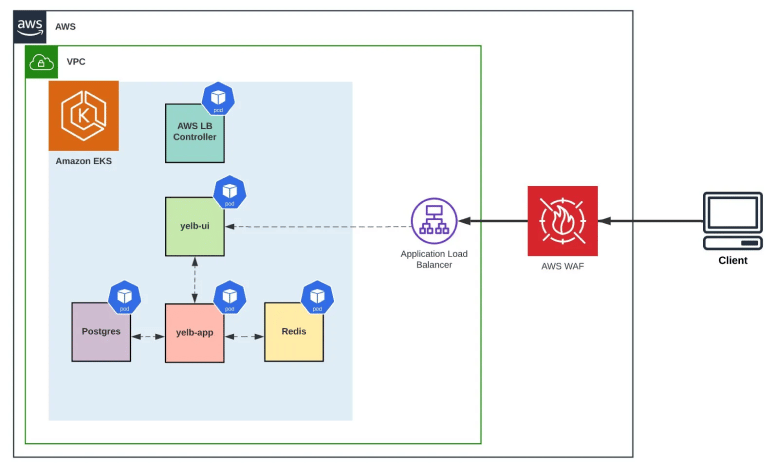

At the application layer, AWS WAF comes in handy to define the rules that govern traffic to our application. AWS WAF works in tandem with other AWS services, such as Application Load Balancer and Amazon CloudFront.

When attached to an Application Load Balancer, AWS WAF evaluates incoming requests against rules and rule groups, such as protections against cross-site scripting (XSS) or SQL injection, before the traffic reaches the application. Each rule has an action: count, block, or allow. A rule in count mode records matching requests and lets evaluation continue, which is useful for testing a rule and logging its matches before enforcing it. A rule in allow mode lets matching requests through, and a rule in block mode rejects matching requests.
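The interplay of the three actions can be sketched as a toy evaluator: rules run in priority order, count rules record a match and let evaluation continue, allow and block end evaluation, and a default action applies when no terminating rule matches. The class, function, and rule names are hypothetical, and the `naive-sqli` predicate is a simple string check for illustration only; it is nowhere near as thorough as AWS WAF’s managed SQL injection rules.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class WafRule:
    name: str
    priority: int                    # lower numbers are evaluated first
    matches: Callable[[dict], bool]  # hypothetical predicate over a request
    action: str                      # "count", "allow", or "block"


def waf_decide(rules: list[WafRule], request: dict, default_action: str = "allow") -> tuple[str, list[str]]:
    """Return the final action plus the names of count rules that matched."""
    counted: list[str] = []
    for rule in sorted(rules, key=lambda r: r.priority):
        if not rule.matches(request):
            continue
        if rule.action == "count":
            counted.append(rule.name)  # non-terminating: record and keep going
            continue
        return rule.action, counted    # allow and block end evaluation
    return default_action, counted     # no terminating rule matched


rules = [
    WafRule("log-admin", 0, lambda r: r["path"].startswith("/admin"), "count"),
    WafRule("naive-sqli", 1, lambda r: "' or 1=1" in r["query"].lower(), "block"),
]
print(waf_decide(rules, {"path": "/admin", "query": "id=1' OR 1=1"}))  # ('block', ['log-admin'])
print(waf_decide(rules, {"path": "/home", "query": "id=1"}))           # ('allow', [])
```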

Another AWS service that adds a further layer of protection to our applications is AWS Shield. Its Standard tier, included at no additional cost, defends against the most common and frequently occurring network and transport layer DDoS attacks that target customers’ websites or applications. Its Advanced tier, AWS Shield Advanced, adds protection against application layer (layer 7) DDoS attacks, with features such as tailored detection based on application traffic patterns, health-based detection, advanced attack mitigation, and automatic application layer DDoS mitigation, among others.

Conclusion

Understanding how to harden each layer is paramount to keeping our cloud systems secure, and AWS can cater to security needs from layers 3 to 7. This post aims to give developers some security best practices to get the most out of their cloud systems’ potential.

However, note that this list is not exhaustive, and deploying these services and configurations requires technical expertise. DinoCloud is a Premier AWS Partner with a team of experts that can help you achieve safer cloud deployments. Get started by talking to one of our representatives.
